SUB AGENT ORCHESTRATION

A deep dive into the engineering and architecture behind sub-agent orchestration in the OpenClaw AI ecosystem.

Distributed State Convergence in Multi-Agent Topologies

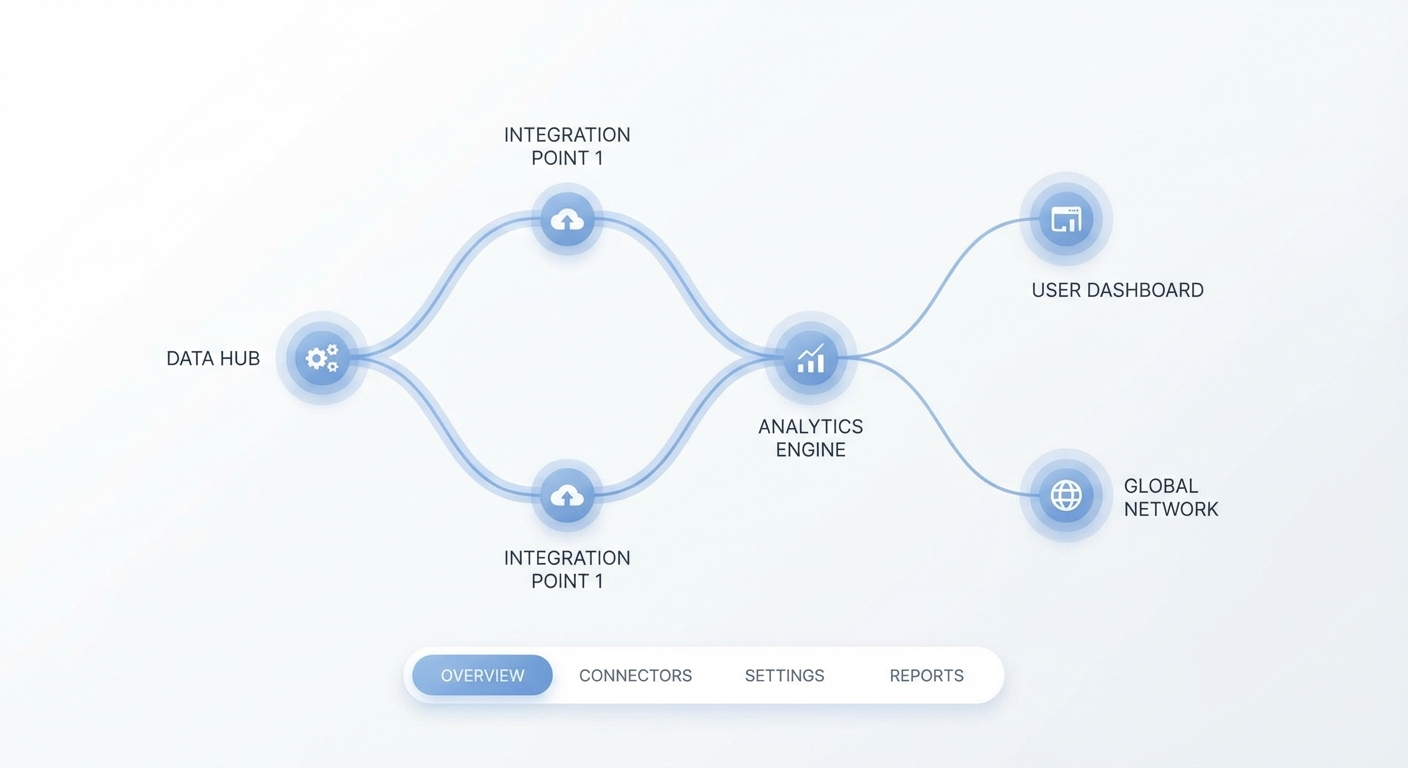

Distributed multi-agent systems suffer from state fragmentation when they are constrained to a synchronous, monolithic execution model. Within OpenClaw, sub-agent orchestration departs from linear invocation patterns: work is modeled as a directed acyclic graph (DAG) of asynchronous processing units executed by a mesh of decentralized workers. The orchestration layer is designed so that worker states converge deterministically even under highly variable computational load. Granular cognitive tasks, ranging from semantic code parsing to deep architectural synthesis, are compartmentalized into isolated execution scopes, which avoids namespace collisions and cross-contamination of system resources.

The critical failure point in legacy orchestration paradigms is their inability to detect and manage cyclic dependencies among cognitive nodes. OpenClaw implements a proprietary state reconciliation engine that monitors the memory heap of each active sub-agent. When a sub-agent completes its vector and matrix calculations or lexical analysis routines, the resulting tensor state is serialized into a compact binary format. The serialized records act as an immutable ledger that synchronizes local worker states with the global orchestrator registry and mitigates the race conditions typically associated with massively parallel agentic architectures.

Specialized arbitration nodes in the network topology provide a dedicated tier for conflict resolution. When two parallel sub-agents generate diverging outputs from the same contextual seed, the arbitration node evaluates the entropy of both results and applies a cryptographic hash to validate the structural integrity of each. Only outputs whose integrity can be verified are merged into the final execution pipeline, preserving the reliability demanded by mission-critical deployment environments.
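The integrity check an arbitration node performs could be sketched as follows. This is a minimal illustration, assuming sub-agent outputs are JSON-serializable dictionaries; `canonical_digest` and `arbitrate` are hypothetical names, and the entropy evaluation mentioned above is not modeled. Note that a matching digest shows two outputs are byte-for-byte equivalent after canonicalization, not that either one is correct.

```python
import hashlib
import json


def canonical_digest(output: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys, compact separators)."""
    encoded = json.dumps(output, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def arbitrate(a: dict, b: dict) -> dict:
    """Compare two sub-agent outputs by digest and report agreement or conflict."""
    digest_a, digest_b = canonical_digest(a), canonical_digest(b)
    verdict = "agree" if digest_a == digest_b else "conflict"
    return {"verdict": verdict, "digests": (digest_a, digest_b)}
```

Canonicalizing before hashing means that key order alone never causes two outputs to be reported as conflicting.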

Asynchronous Payload Negotiation Protocol

Communication between autonomous sub-agents requires a protocol that can move high-throughput semantic payloads without copy overhead. OpenClaw introduces the Asynchronous Payload Negotiation Protocol (APNP), an inter-process communication mechanism designed explicitly for inter-agent tensor distribution. Unlike traditional RESTful or gRPC frameworks, APNP operates natively on shared memory segments, using POSIX shared memory interfaces on Linux and memory-mapped files on Windows, so that data transfer avoids per-message system calls and kernel-mediated copies.
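The shared-memory idea can be illustrated with Python's standard `multiprocessing.shared_memory` module. This is only a sketch of the underlying mechanism, not APNP itself; `write_payload` and `read_payload` are hypothetical helpers, and the reader here copies the bytes out for simplicity where a real zero-copy consumer would work on the buffer view directly.

```python
from multiprocessing import shared_memory


def write_payload(payload: bytes) -> shared_memory.SharedMemory:
    """Create a named shared memory segment and place the payload in it."""
    shm = shared_memory.SharedMemory(create=True, size=len(payload))
    shm.buf[: len(payload)] = payload
    return shm  # caller owns the segment and must close() and unlink() it


def read_payload(name: str, length: int) -> bytes:
    """Attach to an existing segment by name and read `length` bytes."""
    shm = shared_memory.SharedMemory(name=name)
    try:
        # The segment may be rounded up to a page size, so the length is explicit.
        return bytes(shm.buf[:length])
    finally:
        shm.close()
```

A producer would pass `shm.name` and the payload length to the consumer over a small control channel; only that handle crosses the process boundary, not the payload.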

Each payload transmitted via APNP is validated against a statically typed schema definition; payloads structurally resemble JSON objects such as { "op": "ALLOC", "bytes": 1024 }. When a sub-agent requests execution bandwidth, it first emits a manifest detailing its resource requirements, expected memory allocation, and the cryptographic signature of its executable binary. The orchestration controller parses this manifest and allocates CPU pinning and memory bandwidth according to a scheduling heuristic. Allocating resources up front reduces the risk of priority inversion and ensures that high-latency, I/O-bound tasks do not starve mission-critical computational threads.
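Schema validation of such a payload might look like the sketch below. Only the `op` and `bytes` fields and the `ALLOC` operation come from the example above; the `FREE` operation, the `Manifest` class, and `parse_manifest` are assumptions made for illustration, and the signature field is omitted.

```python
from dataclasses import dataclass

# "ALLOC" appears in the text; "FREE" is an assumed second operation.
ALLOWED_OPS = {"ALLOC", "FREE"}


@dataclass(frozen=True)
class Manifest:
    op: str
    size_bytes: int


def parse_manifest(raw: dict) -> Manifest:
    """Validate a raw payload and return a typed manifest, or raise ValueError."""
    unknown = set(raw) - {"op", "bytes"}
    if unknown:
        raise ValueError(f"unknown fields: {sorted(unknown)}")
    op = raw.get("op")
    size = raw.get("bytes")
    if not isinstance(op, str) or op not in ALLOWED_OPS:
        raise ValueError(f"invalid op: {op!r}")
    # bool is a subclass of int in Python, so it is rejected explicitly.
    if not isinstance(size, int) or isinstance(size, bool) or size <= 0:
        raise ValueError(f"invalid bytes: {size!r}")
    return Manifest(op=op, size_bytes=size)
```

Rejecting unknown fields keeps the schema strict, so a malformed manifest fails before any resources are allocated.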

Beyond basic resource provisioning, APNP enables fine-grained payload delta transmission. Instead of re-broadcasting entire contextual histories for each inter-agent transaction, the protocol calculates the structural drift between the last known state and the current vector alignment. By transmitting only these delta fragments, the system substantially reduces bandwidth overhead, allowing thousands of ephemeral sub-agents to synchronize their local contexts with microsecond-scale latency.
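Delta transmission can be sketched with flat key-value state. This is a simplified illustration, assuming context is a dictionary of comparable values; `compute_delta` and `apply_delta` are hypothetical names, and the wire format shown is not APNP's.

```python
def compute_delta(old: dict, new: dict) -> dict:
    """Return only the keys that changed or were added, plus the keys removed."""
    changed = {k: v for k, v in new.items() if k not in old or old[k] != v}
    removed = [k for k in old if k not in new]
    return {"set": changed, "unset": removed}


def apply_delta(state: dict, delta: dict) -> dict:
    """Rebuild the new state from the last known state and a delta."""
    result = dict(state)
    result.update(delta["set"])
    for key in delta["unset"]:
        result.pop(key, None)
    return result
```

The receiver holds the last known state, so `apply_delta(old, compute_delta(old, new))` reproduces `new` while only the changed entries travel over the wire.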

Hierarchical Context Partitioning and Memory Sharding

Managing the growth of contextual memory during extended execution cycles is a significant architectural challenge. To avoid the limitations of monolithic vector databases, OpenClaw uses hierarchical context partitioning. The global context is dynamically sharded across a distributed hash table (DHT), where each shard represents a discrete semantic domain, such as architectural blueprints, syntax trees, or runtime telemetry data.

Sub-agents are statically bound to specific shards for the duration of their lifecycle, preventing global namespace bloat. This boundary is enforced through aggressive memory paging: inactive context shards are flushed to NVMe storage using memory-mapped I/O, while the shards used by active execution pipelines stay resident in RAM and, where the access pattern allows, in L3 cache. The orchestrator tracks a heat map of shard access frequencies and prefetches data into volatile memory before a sub-agent explicitly requests it, thereby masking latency and maximizing throughput.
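The heat-map behavior described above can be sketched with an in-memory stand-in for the storage tiers. Everything here is an assumption for illustration: `ShardCache` is a hypothetical class, a plain dictionary plays the role of NVMe-backed storage, and access counts are used as the only heat signal.

```python
from collections import Counter


class ShardCache:
    """Keeps the most frequently accessed shards resident, up to a capacity."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.store: dict[str, bytes] = {}  # stand-in for NVMe-backed storage
        self.hot: dict[str, bytes] = {}    # shards resident in memory
        self.heat: Counter = Counter()     # access count per shard

    def put(self, shard_id: str, data: bytes) -> None:
        self.store[shard_id] = data

    def _load(self, shard_id: str) -> None:
        if len(self.hot) >= self.capacity:
            coldest = min(self.hot, key=lambda s: self.heat[s])
            del self.hot[coldest]  # data remains in the backing store
        self.hot[shard_id] = self.store[shard_id]

    def get(self, shard_id: str) -> bytes:
        self.heat[shard_id] += 1
        if shard_id not in self.hot:
            self._load(shard_id)
        return self.hot[shard_id]

    def prefetch(self):
        """Load the hottest non-resident shard if it would not displace a hotter one."""
        absent = [s for s in self.store if s not in self.hot]
        if not absent:
            return None
        candidate = max(absent, key=lambda s: self.heat[s])
        has_room = len(self.hot) < self.capacity
        if has_room or self.heat[candidate] > min(self.heat[s] for s in self.hot):
            self._load(candidate)
            return candidate
        return None
```

A production design would likely decay heat over time so that shards that were hot long ago do not crowd out currently active ones.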

Distributed Locking Protocols

To preserve the integrity of these partitions during rapid scaling events, OpenClaw implements a distributed lock manager based on the Raft consensus algorithm. When a sub-agent requires exclusive write access to a context partition, it must acquire a lease from a quorum of orchestration nodes. This locking mechanism prevents dirty reads and ensures that concurrent mutations to the system architecture are serialized, preserving strict consistency across the entire cluster.
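The lease semantics can be shown with a single-node sketch. This deliberately leaves out Raft: there is no quorum, log replication, or leader election here, only the time-bounded exclusive lease that such a lock manager would hand out. `LeaseTable` and its methods are hypothetical names, and the clock is injectable so the behavior can be tested deterministically.

```python
import time


class LeaseTable:
    """Single-node lease bookkeeping; a real deployment would replicate this state."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._leases = {}  # partition -> (holder, expires_at)

    def acquire(self, partition: str, holder: str, ttl: float) -> bool:
        """Grant or renew a lease unless another holder has an unexpired one."""
        now = self._clock()
        current = self._leases.get(partition)
        if current is not None and current[0] != holder and current[1] > now:
            return False
        self._leases[partition] = (holder, now + ttl)
        return True

    def release(self, partition: str, holder: str) -> None:
        """Drop the lease, but only if the caller actually holds it."""
        current = self._leases.get(partition)
        if current is not None and current[0] == holder:
            del self._leases[partition]
```

Because leases expire on their own, a crashed sub-agent cannot hold a partition forever; the trade-off is that holders must finish or renew before the time-to-live elapses.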

Deterministic Dependency Resolution Algorithms

The orchestration layer cannot operate blindly; it must possess an absolute understanding of the causal relationships between distinct computational tasks. OpenClaw utilizes a customized topological sorting algorithm to resolve the complex web of sub-agent dependencies. Before a single unit of work is executed, the entire task graph is statically analyzed to identify cyclical dependencies, redundant execution paths, and potential race conditions.

- Static Analysis Pre-computation: Eliminating cyclical references before they hit the runtime engine to maintain an acyclic graph geometry.

- Dynamic Heuristic Bin-packing: Allocating parallel workloads to optimal hardware substrates dynamically based on tensor weight estimations.

- Rollback and Isolation: Quarantining corrupted topological states without halting the entire orchestrator graph or sacrificing uptime.

This static analysis phase constructs an immutable execution blueprint. By enforcing a strict Directed Acyclic Graph (DAG) structure, the orchestrator guarantees that prerequisite tasks achieve finality before their dependent processes are initialized. If the system detects a cyclical dependency during the graph construction phase, the orchestrator triggers an immediate rollback, isolating the offending payload and alerting the enterprise telemetry dashboard for manual intervention or automated heuristic correction.
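The cycle check and ordering step can be sketched with Python's standard `graphlib` module. This is a minimal stand-in for the customized algorithm described above, not a reproduction of it; `build_execution_plan` is a hypothetical name, and the input maps each task to the set of tasks it depends on.

```python
from graphlib import CycleError, TopologicalSorter


def build_execution_plan(dependencies: dict[str, set[str]]) -> list[str]:
    """Return tasks in an order where every prerequisite precedes its dependents.

    Raises ValueError naming the cycle if the graph is not acyclic.
    """
    sorter = TopologicalSorter(dependencies)
    try:
        return list(sorter.static_order())
    except CycleError as exc:
        # graphlib exposes the detected cycle as the second element of args.
        cycle = exc.args[1]
        raise ValueError("cyclic dependency: " + " -> ".join(cycle)) from exc
```

Because the whole graph is validated before anything runs, a cycle surfaces as an error at plan construction time rather than as a deadlock at runtime.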

Furthermore, the dependency resolution engine seamlessly integrates with the dynamic scaling policies of the hosting infrastructure. Nodes within the DAG are annotated with estimated execution weights, derived from historical telemetry data. The orchestrator uses these weights to pack parallel tasks into heterogeneous compute clusters, routing tensor operations to GPU nodes while delegating pure logical parsing to high-frequency CPU cores. This dynamic workload bin-packing maximizes hardware utilization and significantly accelerates time-to-insight.
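Weight-based packing can be illustrated with a greedy heuristic that assigns the heaviest task first to the currently least-loaded worker. This is one common approach, chosen here for illustration and not confirmed as OpenClaw's; `pack_tasks` is a hypothetical name, workers are treated as identical, and the GPU versus CPU routing described above is not modeled.

```python
import heapq


def pack_tasks(weights: dict[str, float], workers: int) -> list[list[str]]:
    """Greedily assign tasks, heaviest first, to the least-loaded worker."""
    if workers <= 0:
        raise ValueError("workers must be positive")
    heap = [(0.0, i) for i in range(workers)]  # (current load, worker index)
    bins: list[list[str]] = [[] for _ in range(workers)]
    # Sort by descending weight; ties are broken by task name for determinism.
    for task, weight in sorted(weights.items(), key=lambda kv: (-kv[1], kv[0])):
        load, index = heapq.heappop(heap)
        bins[index].append(task)
        heapq.heappush(heap, (load + weight, index))
    return bins
```

The heuristic does not guarantee an optimal packing, but it is fast and keeps worker loads reasonably balanced when the weight estimates are accurate.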

Ephemeral Execution Sandboxing via Process Virtualization

In enterprise environments where strict zero-trust principles govern all computational activity, standard containerization is often insufficient. OpenClaw enforces strict isolation through ephemeral execution sandboxing. Every sub-agent is instantiated within a micro-VM, utilizing lightweight hypervisors like Firecracker to establish hardware-level boundaries around the execution payload. This virtualization ensures that a compromised sub-agent cannot escalate privileges or exfiltrate cross-tenant memory buffers.

The lifecycle of each sandbox is tightly coupled to the DAG node it executes. Upon task completion, the micro-VM is terminated immediately and all of its volatile memory is zeroed out. This ephemeral approach removes persistent attack vectors and prevents the accumulation of phantom state across long-running orchestration sessions. Sandbox initialization takes less than 150 milliseconds, so the security perimeter does not introduce unacceptable latency into the system pipeline.

Kernel-Level Attack Surface Minimization

Within the sandbox, sub-agents operate under a severely restricted system call profile enforced through seccomp-bpf filters and extended Berkeley Packet Filter (eBPF) policies. Network access is disabled by default; the only available communication vector is the strictly mediated APNP socket routed directly to the orchestration controller. By restricting the attack surface at the kernel level, OpenClaw maintains a strong security posture even when executing arbitrarily generated code on host infrastructure.

Telemetry and Deterministic Consensus Verification

An architecture as complex as OpenClaw requires deep observability to maintain operational stability. The telemetry engine operates entirely out-of-band, using a lock-free ring buffer design to ingest millions of metrics per second from active sub-agents without degrading their execution performance. This telemetry data includes garbage collection stalls, memory allocation rates, IPC round-trip latencies, and contextual vector drift, giving engineers a view of cluster health at sub-millisecond resolution.
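The ring buffer data structure itself can be sketched in a few lines. This illustrates only the fixed-capacity, overwrite-oldest behavior; it makes no lock-free or multi-threaded guarantees, which in practice depend on atomic operations in a lower-level language. `RingBuffer` is a hypothetical class.

```python
class RingBuffer:
    """Fixed-capacity buffer that overwrites the oldest entry when full."""

    def __init__(self, capacity: int):
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._slots = [None] * capacity
        self._capacity = capacity
        self._written = 0  # total number of pushes so far

    def push(self, item) -> None:
        """Write into the next slot, wrapping around at the end."""
        self._slots[self._written % self._capacity] = item
        self._written += 1

    def snapshot(self) -> list:
        """Return the retained items from oldest to newest."""
        count = min(self._written, self._capacity)
        start = self._written - count
        return [self._slots[i % self._capacity] for i in range(start, self._written)]
```

Writers never block or allocate once the buffer exists, which is why this shape suits high-rate metric ingestion; the cost is that the oldest samples are dropped when consumers fall behind.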

Observability is only the foundation; the central innovation is Deterministic Consensus Verification (DCV). In scenarios involving complex architectural refactoring, the orchestrator launches multiple parallel sub-agents with identical contexts but distinct heuristic seeds. These sub-agents generate competing implementations. The DCV engine parses each output into an abstract syntax tree (AST) and evaluates it against enterprise coding standards, cyclomatic complexity thresholds, and the findings of static security analysis tools.

The orchestrator selects the best implementation through a verifiable scoring matrix and discards lower-scoring outputs before they merge into the primary code branch. This evolutionary computing approach, bounded by strict deterministic rulesets, ensures that every implementation OpenClaw delivers has passed the same measurable checks. It marks a shift from simple generative iteration to verified architectural synthesis for automated enterprise engineering.
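A scoring matrix of this kind can be sketched as a weighted sum over per-candidate metrics. The metric names, weights, and the functions `score` and `select_best` are all assumptions for illustration; the source does not specify how DCV weighs its criteria. Negative weights act as penalties.

```python
def score(metrics: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted sum of a candidate's metrics; every weighted metric must be present."""
    return sum(weights[name] * metrics[name] for name in weights)


def select_best(candidates: dict[str, dict[str, float]], weights: dict[str, float]) -> str:
    """Return the id of the highest-scoring candidate.

    Candidates are visited in sorted id order, so ties resolve deterministically.
    """
    if not candidates:
        raise ValueError("no candidates to select from")
    return max(sorted(candidates), key=lambda cid: score(candidates[cid], weights))
```

For example, with weights of -1.0 per lint violation, -0.5 per point of cyclomatic complexity, and -10.0 per security finding, a candidate with one security finding scores lower than a clean candidate with a few lint violations.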