OpenClaw vs LangChain: The Enterprise Switch

Why top engineering teams are migrating from LangChain and CrewAI to OpenClaw for their autonomous agents.

Architectural Divergence in Autonomous Agent Frameworks

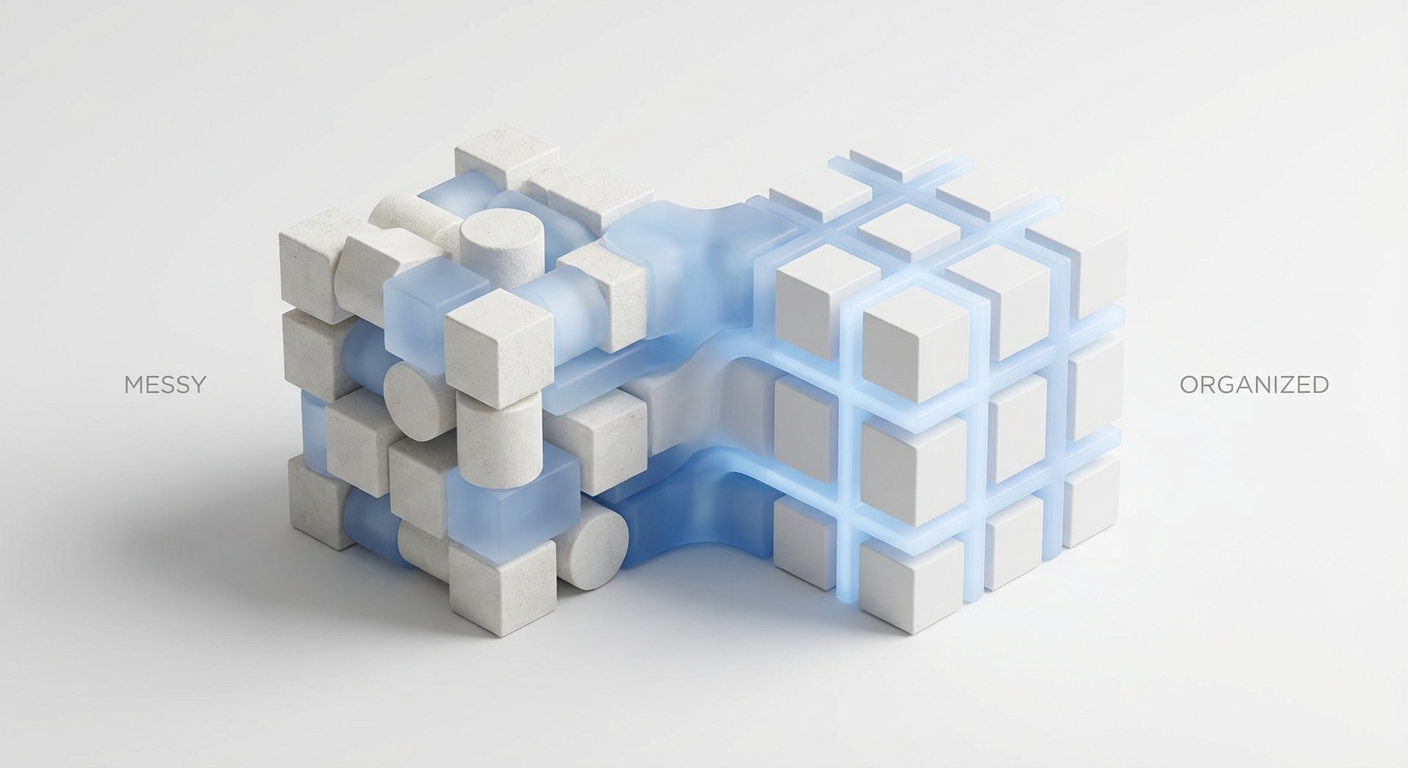

The landscape of Large Language Model (LLM) orchestration has shifted substantially over the past twenty-four months. Initially, the industry relied heavily on rudimentary prompt-templating sequences and simple wrapper libraries to interface with inference endpoints. As organizational demands escalated from passive text generation to active, autonomous agentic behavior, framework architectures had to evolve quickly. In this ecosystem, two distinct philosophies have emerged: the generalized pipeline chaining popularized by LangChain, and the enterprise-grade, deterministic state-machine orchestration pioneered by the OpenClaw framework. Understanding the architectural divergence between these two systems is critical for scaling agent architectures in production environments.

At the core of this dichotomy is the fundamental tension between developer velocity during the prototyping phase and system reliability during the deployment phase. Early frameworks prioritized rapid abstraction, allowing developers to swap out underlying models with minimal code changes. However, as the complexity of agentic tasks increased, these leaky abstractions began to introduce catastrophic failure modes. When an autonomous agent attempts to execute a multi-step reasoning protocol, the underlying framework must provide robust error recovery, precise state management, and an impenetrable execution sandbox.

When evaluating frameworks for mission-critical enterprise applications, engineers must analyze the execution engine's topology, the latency overhead introduced by memory retrieval mechanisms, and the telemetry capabilities required for observability. OpenClaw was engineered from the ground up to address the systemic bottlenecks that occur when stochastic models are forced into rigid, generalized pipelines. This post provides a granular, technical dissection of how OpenClaw diverges from LangChain's foundational principles, focusing on concurrency models, memory architectures, and the uncompromising enforcement of execution boundaries.

Deterministic Execution Topologies Versus Generalized Expression Languages

LangChain relies heavily on the open-source LangChain Expression Language (LCEL) and the LangGraph extension to coordinate complex agent workflows. LCEL composes runnables into pipelines that form Directed Acyclic Graphs (DAGs); LangGraph adds stateful graphs that may contain cycles, with explicit node-to-node routing. In a LangGraph implementation, the transition logic between execution states is defined by the edges of the graph, including conditional edges declared ahead of time. While this provides a visually intuitive representation of the agent's potential pathways, any backtracking or lateral move the agent may need when it hits an unexpected failure state has to be anticipated and wired in as an edge.

In sharp contrast, OpenClaw abandons statically declared graph edges in favor of an event-driven state machine architecture powered by an internal actor-model runtime. Instead of hardcoding graph edges, OpenClaw selects transitions at runtime according to semantic policy constraints and real-time environment evaluations. When an OpenClaw agent invokes a sequence of tools, the runtime does not blindly follow a pre-calculated route. It continuously evaluates the model's outputs against the formal schema requirements of the current execution context.

This architectural distinction profoundly impacts error handling. In a standard LangChain implementation, a tool execution failure typically requires custom exception catching logic embedded within specific graph nodes, often leading to deep, nested conditional logic that is notoriously difficult to test. OpenClaw treats all execution anomalies as standard state transitions. If an API endpoint times out or returns malformed JSON, the OpenClaw runtime autonomously halts the current actor thread, isolates the context window, and transitions into a dedicated recovery state. This allows the system to engage self-correction protocols—such as regenerating payloads or switching to fallback heuristic routines—without the developer manually wiring exception pathways.
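
The idea of treating failures as ordinary state transitions can be sketched in a few lines of Python. This is a minimal illustration, not OpenClaw's API: `State`, `AgentRun`, `step`, and `drive` are hypothetical names, and the recovery policy (a simple retry budget) is an assumption chosen for brevity.

```python
from dataclasses import dataclass, field
from enum import Enum, auto
from typing import Callable


class State(Enum):
    RUNNING = auto()
    RECOVERING = auto()
    DONE = auto()
    FAILED = auto()


@dataclass
class AgentRun:
    """Hypothetical run record; not an OpenClaw type."""
    state: State = State.RUNNING
    attempts: int = 0
    history: list = field(default_factory=list)
    result: object = None


def step(run: AgentRun, tool: Callable[[], object], max_attempts: int = 3) -> AgentRun:
    """Advance the run by one transition; tool errors become states, not raised exceptions."""
    run.history.append(run.state)
    if run.state is State.RUNNING:
        run.attempts += 1
        try:
            run.result = tool()
            run.state = State.DONE
        except (TimeoutError, ValueError):
            # Timeouts and malformed payloads (json.JSONDecodeError subclasses
            # ValueError) route to the recovery state instead of propagating.
            run.state = State.RECOVERING
    elif run.state is State.RECOVERING:
        # Assumed recovery policy: retry until the attempt budget is spent.
        run.state = State.RUNNING if run.attempts < max_attempts else State.FAILED
    return run


def drive(tool: Callable[[], object], max_attempts: int = 3) -> AgentRun:
    """Run transitions until the machine reaches a terminal state."""
    run = AgentRun()
    while run.state not in (State.DONE, State.FAILED):
        step(run, tool, max_attempts)
    return run
```

Because the caller only inspects `run.state` and `run.history`, there is no exception plumbing to wire through individual nodes; a richer recovery state could regenerate the payload or pick a fallback tool at the same point.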

High-Fidelity Memory Contextualization and Retrieval Parallelization

Memory management remains one of the most computationally expensive aspects of running large-scale autonomous agents. LangChain's memory modules, such as ConversationBufferMemory and ConversationSummaryMemory, historically operate as monolithic state containers that sequentially inject historical interactions into the prompt payload. As the conversational depth increases, these implementations frequently devolve into naive string concatenations or require synchronous, blocking LLM calls to generate summarized context, drastically increasing the time-to-first-token (TTFT) and degrading the overall user experience.

OpenClaw mitigates these performance bottlenecks by implementing a multi-tiered, asynchronous memory architecture that segregates context into distinct episodic, semantic, and procedural streams. Episodic memory handles the immediate conversational turn buffers; semantic memory interfaces with distributed vector databases for knowledge retrieval; and procedural memory maintains a registry of previously successful tool execution schemas and execution traces. This segregation ensures that the context window is never polluted with irrelevant data types.
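
To make the three-stream split concrete, here is a small sketch of how such a memory object might be organized. Everything in it is assumed for illustration: the `TieredMemory` class, its method names, and the keyword lookup standing in for a real vector database query.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class TieredMemory:
    """Hypothetical three-stream memory; names are illustrative only."""
    # Episodic: bounded buffer of the most recent conversational turns.
    episodic: deque = field(default_factory=lambda: deque(maxlen=4))
    # Semantic: a plain dict standing in for a vector store.
    semantic: dict = field(default_factory=dict)
    # Procedural: tool name -> arguments of the last successful call.
    procedural: dict = field(default_factory=dict)

    def remember_turn(self, role: str, text: str) -> None:
        self.episodic.append((role, text))

    def remember_tool_success(self, tool: str, args: dict) -> None:
        self.procedural[tool] = args

    def build_context(self, query: str, tools: list[str]) -> dict:
        """Assemble a context, pulling from each stream only what this step needs."""
        words = set(query.lower().split())
        facts = [fact for key, fact in self.semantic.items() if key in words]
        return {
            "turns": list(self.episodic),
            "facts": facts,
            "tool_examples": {t: self.procedural[t] for t in tools if t in self.procedural},
        }
```

Keeping the streams separate means each one can have its own eviction rule (here only the episodic buffer is bounded) and the prompt builder decides per step which streams to consult.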

Furthermore, OpenClaw executes vector store retrieval operations concurrently with the initial prompt processing phase. By utilizing predictive pre-fetching mechanisms, the framework anticipates the contextual data required for upcoming state transitions. The injection pipeline utilizes zero-copy memory mapping techniques (where supported by the host OS) to merge the retrieved passages into the prompt context payload. This orchestration allows retrieval latency to be largely masked by the model's inference time, resulting in a highly responsive system.
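
The overlap between retrieval and prompt preparation can be illustrated with `asyncio.gather`. This is a sketch under stated assumptions: `retrieve` and `prepare_prompt` are hypothetical stand-ins whose `asyncio.sleep` calls simulate I/O latency, and nothing here reflects OpenClaw's actual pipeline or its pre-fetching logic.

```python
import asyncio


async def retrieve(query: str, delay: float = 0.2) -> list[str]:
    """Stand-in for a vector store lookup; the sleep simulates network latency."""
    await asyncio.sleep(delay)
    return [f"passage about {query}"]


async def prepare_prompt(user_input: str, delay: float = 0.2) -> str:
    """Stand-in for prompt preprocessing (templating, tokenization, and so on)."""
    await asyncio.sleep(delay)
    return f"User: {user_input}"


async def build_context(user_input: str) -> str:
    # Start both coroutines at once; the total wait is roughly the slower
    # of the two rather than their sum.
    prompt, passages = await asyncio.gather(
        prepare_prompt(user_input),
        retrieve(user_input),
    )
    return "\n".join(["Context:", *passages, prompt])
```

Calling `asyncio.run(build_context("billing"))` takes about as long as one simulated delay rather than two, which is the masking effect described above in miniature.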

Strict Tool Orchestration and Memory-Safe Execution Sandboxing

The integration of external tools and APIs represents the largest attack surface in any agentic framework. In LangChain, tools are commonly registered with the @tool decorator, which exposes Python functions to the LLM's execution loop. The decorator can derive an argument schema from the function's type hints, but how strictly the function treats its inputs, and what it does with them, is left to the developer. While convenient, this leaves a gap. If the model hallucinates a parameter value, or worse, generates a malicious payload via prompt injection, a tool that passes its arguments to a shell, an interpreter, or a database without further checks can lead directly to arbitrary code execution or state corruption within the host environment.

Security is not an afterthought in OpenClaw; it is the foundational layer of its tool orchestration engine. OpenClaw implements a strict, multi-stage schema validation layer utilizing high-performance, Rust-backed JSON schema parsers. Before any tool is invoked, the model's output payload is formally verified against an immutable, cryptographically signed schema definition. If the payload deviates even slightly from the expected format—for instance, passing a string where an integer was expected, or including unauthorized keys like { "override": true }—the invocation is blocked at the perimeter, and a structured rejection event is emitted.
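
A strict, deny-by-default validation step of this kind might look like the following sketch. It uses only the Python standard library rather than a Rust-backed parser, omits the signature check on the schema, and every name in it (`SCHEMA`, `Rejection`, `validate_call`) is hypothetical.

```python
import json
from dataclasses import dataclass

# Hypothetical schema format: argument name -> required Python type.
SCHEMA = {"invoice_id": int, "currency": str}


@dataclass(frozen=True)
class Rejection:
    """Structured rejection event; the field names are illustrative."""
    tool: str
    reason: str


def validate_call(tool: str, raw_payload: str, schema: dict):
    """Return the parsed arguments if they match the schema exactly, else a Rejection."""
    try:
        args = json.loads(raw_payload)
    except json.JSONDecodeError:
        return Rejection(tool, "malformed JSON")
    if not isinstance(args, dict):
        return Rejection(tool, "payload is not an object")
    extra = sorted(set(args) - set(schema))
    if extra:
        # Unknown keys are rejected outright rather than silently ignored.
        return Rejection(tool, f"unauthorized keys: {extra}")
    missing = sorted(set(schema) - set(args))
    if missing:
        return Rejection(tool, f"missing keys: {missing}")
    for name, expected in schema.items():
        # Exact type match, so "7" or True is not accepted where an int is expected.
        if type(args[name]) is not expected:
            return Rejection(tool, f"{name}: expected {expected.__name__}")
    return args
```

With this sketch, a payload such as `{"invoice_id": 7, "currency": "EUR", "override": true}` never reaches the tool: the caller receives a `Rejection` it can log or feed back to the model.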

Beyond payload validation, OpenClaw isolates the execution context of complex tools. Rather than running generated scripts or data processing commands within the main application thread, OpenClaw delegates these tasks to secure sandboxes. Depending on the deployment configuration, tools are executed within ephemeral, unprivileged Docker containers or memory-safe WebAssembly (Wasm) runtimes. As a result, even if a model crafts a malicious payload that passes schema validation, the damage is confined to a disposable environment with no direct access to the enterprise's core infrastructure.
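
For the Docker variant, an ephemeral and unprivileged container can be approximated with standard `docker run` flags. The helper below only builds the argument list; the function name, the resource limits, and the choice of uid are assumptions for illustration, not OpenClaw's configuration.

```python
def sandbox_command(image: str, tool_argv: list[str], memory: str = "256m") -> list[str]:
    """Build a `docker run` invocation for an ephemeral, unprivileged container."""
    return [
        "docker", "run",
        "--rm",                                 # delete the container on exit
        "--network", "none",                    # no network access
        "--read-only",                          # read-only root filesystem
        "--cap-drop", "ALL",                    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",  # block privilege escalation
        "--user", "65534:65534",                # unprivileged uid:gid (commonly "nobody")
        "--memory", memory,                     # hard memory limit
        "--pids-limit", "64",                   # cap the number of processes
        image,
        *tool_argv,
    ]
```

Passing the resulting list to `subprocess.run(cmd, capture_output=True, timeout=30)` without `shell=True` keeps model-generated arguments from being interpreted by a shell on the host.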

Zero-Allocation Observability and Deterministic Telemetry Replay

Diagnosing logic failures in distributed agent networks requires granular visibility into the execution lifecycle. LangChain typically relies on external dependencies, most notably LangSmith, to provide tracing and observability. While these platforms are feature-rich, they introduce significant network latency overhead, externalize sensitive enterprise data, and complicate deployment in air-gapped or highly regulated compliance environments. Retrofitting observability onto a generalized framework often results in bloated memory footprints and degraded inference throughput.

OpenClaw addresses this challenge by embedding zero-allocation tracing directly into the core runtime engine. Telemetry is not treated as a bolted-on monitoring layer; it is an intrinsic byproduct of the state machine's transitions. Every token generation sequence, memory retrieval hit, tool validation check, and network payload is asynchronously logged using structured, binary-packed formats. This ensures that the telemetry subsystem consumes negligible CPU cycles and memory bandwidth, allowing it to run continuously in production without throttling the main execution thread.
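
The combination of binary-packed records and a preallocated buffer can be sketched with Python's `struct` module. Python itself allocates objects freely, so this shows only the shape of the technique (fixed-width records written into existing memory), and the record layout, event kinds, and `TraceBuffer` class are all hypothetical.

```python
import struct
import time
from typing import Optional

# Hypothetical fixed-width record: timestamp (ns), event kind, agent id, payload size.
# "<" = little-endian with no padding; Q = uint64, H = uint16, I = uint32.
RECORD = struct.Struct("<QHII")

KIND_TOKEN, KIND_RETRIEVAL, KIND_TOOL_CHECK = 1, 2, 3


class TraceBuffer:
    """Preallocated ring buffer; recording an event writes into existing memory."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.buf = bytearray(RECORD.size * capacity)
        self.count = 0

    def record(self, kind: int, agent_id: int, payload_size: int,
               ts_ns: Optional[int] = None) -> None:
        slot = self.count % self.capacity  # overwrite the oldest slot when full
        ts = time.monotonic_ns() if ts_ns is None else ts_ns
        RECORD.pack_into(self.buf, slot * RECORD.size, ts, kind, agent_id, payload_size)
        self.count += 1

    def events(self) -> list[tuple]:
        """Decode stored records in slot order (not chronological once wrapped)."""
        n = min(self.count, self.capacity)
        return [RECORD.unpack_from(self.buf, i * RECORD.size) for i in range(n)]
```

Each record occupies 18 bytes, and the buffer never grows after construction, which is what keeps the cost per event small and predictable.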

The most powerful consequence of OpenClaw's structured telemetry is deterministic replay. Because the entire state of the agent is captured via immutable events, engineers can ingest these logs into local debugging environments and reconstruct the exact sequence of events that led to a specific failure or hallucination. Replay makes the following possible:

- Complete reconstruction of the memory context window exactly as it appeared at the moment of failure.

- Step-by-step re-evaluation of schema validation logic against historic payloads.

- Time-travel debugging capabilities without requiring external SaaS dependencies or breaching data residency requirements.
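
Replay from an event log reduces to folding a deterministic transition function over the recorded events. The sketch below assumes a JSON-lines log with made-up event kinds (`message`, `tool_call`, `tool_rejected`); `AgentState`, `apply`, and `replay` are hypothetical names, not OpenClaw's log format or API.

```python
import json
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AgentState:
    """Hypothetical agent state rebuilt purely from logged events."""
    context: list = field(default_factory=list)
    tool_calls: list = field(default_factory=list)
    status: str = "running"


def apply(state: AgentState, event: dict) -> AgentState:
    """Deterministic transition: depends only on the current state and the event."""
    kind = event["kind"]
    if kind == "message":
        state.context.append(event["text"])
    elif kind == "tool_call":
        state.tool_calls.append((event["tool"], event["args"]))
    elif kind == "tool_rejected":
        state.status = "failed"
    return state


def replay(log_lines: list[str], upto: Optional[int] = None) -> AgentState:
    """Rebuild state from a JSON-lines log, optionally stopping after `upto` events."""
    state = AgentState()
    for line in log_lines[:upto]:
        state = apply(state, json.loads(line))
    return state
```

Calling `replay(log, upto=n)` for decreasing `n` steps backwards through the run, which is all that "time-travel" debugging requires once every transition is logged.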

Horizontal Concurrency and Production Cluster Scalability

Deploying autonomous agents at enterprise scale exposes the limitations of frameworks built primarily for single-user, sequential execution. When running hundreds of concurrent agent workflows, Python-based LangChain deployments often run into the Global Interpreter Lock (GIL) in CPython, which lets only one thread execute Python bytecode at a time, or face deadlocks when synchronous code is retrofitted with asynchronous IO wrappers. The overhead of managing thread pools and context switching can come to dominate CPU time, leading to resource starvation and increased response latency across the cluster.

OpenClaw circumvents these scalability constraints by embracing a purely decentralized, actor-model concurrency paradigm built on top of high-performance system primitives. Each OpenClaw agent process is instantiated as an entirely isolated actor that maintains its own internal state, memory heaps, and execution queues. Communication between multiple agents—or between an agent and its peripheral sub-systems—is handled strictly via lock-free message passing over asynchronous channels. This eliminates shared-memory contention and nullifies the risk of thread-safety violations.
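
The message-passing discipline can be shown with a toy actor built on `asyncio.Queue`. This sketch runs cooperatively on a single thread, so it illustrates isolation of state behind a mailbox rather than multi-core parallelism, and `CounterActor` and its methods are hypothetical names.

```python
import asyncio


class CounterActor:
    """Hypothetical actor: private state, one mailbox, one message at a time."""

    def __init__(self) -> None:
        self.mailbox: asyncio.Queue = asyncio.Queue()
        self._total = 0  # touched only by this actor's own task

    async def run(self) -> None:
        while True:
            msg, reply = await self.mailbox.get()
            if msg == "stop":
                reply.set_result(self._total)
                return
            self._total += msg
            reply.set_result(None)

    async def send(self, msg):
        """Post a message and wait for the actor's reply."""
        reply = asyncio.get_running_loop().create_future()
        await self.mailbox.put((msg, reply))
        return await reply


async def main() -> int:
    actor = CounterActor()
    task = asyncio.create_task(actor.run())
    # Many concurrent senders and no locks: the mailbox serializes the updates.
    await asyncio.gather(*(actor.send(i) for i in range(100)))
    total = await actor.send("stop")
    await task
    return total
```

Since no caller ever reads or writes `_total` directly, there is nothing to lock; the same pattern extends to agents exchanging messages across processes or pods.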

From an infrastructure operations perspective, OpenClaw's architecture natively aligns with modern Kubernetes orchestration principles. Because agents operate independently without relying on centralized, synchronous state stores, an OpenClaw cluster scales out linearly. Load balancers can route incoming tasks to available pods dynamically, and the framework automatically manages actor lifecycles, tearing down idle instances to reclaim memory. This robust scalability ensures that OpenClaw remains highly performant and economically viable even under extreme, highly variable enterprise workloads.

Final Assessment: System-Level Rigor Versus General Abstraction

In the analysis of modern AI application architectures, there is no one-size-fits-all solution. LangChain continues to serve as an excellent vehicle for rapid prototyping, hackathons, and linear, predictable generation workflows where development speed is the primary optimization target. Its broad ecosystem of integrations allows developers to test hypotheses and assemble basic pipelines with minimal friction. However, as organizations transition from proof-of-concept to production, the inherent fragility of generalized abstractions becomes an unacceptable operational liability.

OpenClaw represents the necessary evolution from prompt wrappers to true system software. By enforcing strict architectural boundaries, implementing rigorous schema validations, providing zero-allocation telemetry, and building upon an actor-model concurrency framework, OpenClaw provides the necessary primitives to construct secure, deterministic, and highly autonomous multi-agent networks. For engineering teams tasked with deploying mission-critical AI systems in demanding enterprise environments, OpenClaw delivers the uncompromising stability and scalability required to orchestrate the next generation of intelligent systems.