OpenClaw Architecture.

Why local-first orchestration is the future of autonomous AI.

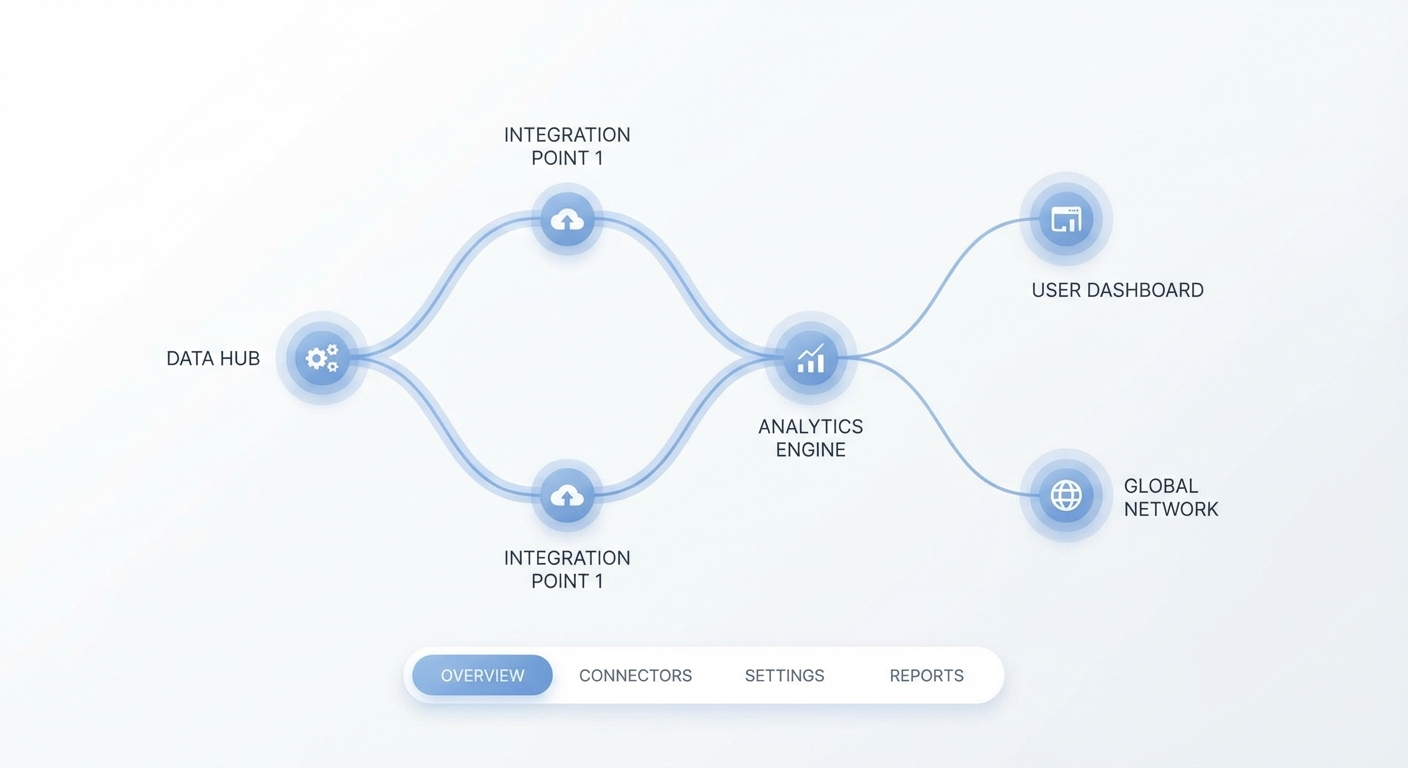

Deconstructing the Distributed Inference Topologies

Autonomous cognitive systems call for a departure from monolithic inference gateways. OpenClaw uses a decentralized topology in which inference is decoupled from state management and from execution orchestration. A high-performance cognitive runtime distributes large language model workloads across a mesh of local worker nodes. This design reduces the latency bottlenecks of cloud-tethered architectures: requests served locally avoid the round trip of an external API call, and computation stays close to the data source. In enterprise environments where millisecond delays compound into significant operational drag, that locality is a measurable performance advantage.

At the foundation of this topology is the Neural Bus, a low-latency, high-throughput messaging layer designed for tensor-based inter-process communication. REST interfaces typically exchange JSON text, and gRPC exchanges general-purpose Protocol Buffers messages; the Neural Bus instead serializes raw tensor states, intermediate activations, and attention matrices directly into the communication stream. This binary protocol enables sub-millisecond context handoffs between specialized micro-agents. Complex reasoning tasks can thus be split up, processed in parallel by different models (each fine-tuned for a specific domain such as code generation or logical deduction), and reassembled into a coherent output vector without loss of fidelity.
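To make the idea of a binary tensor frame concrete, here is a minimal sketch. The frame layout, the `NBUS` magic bytes, and the function names are assumptions for illustration only; the article does not specify the Neural Bus wire format.

```python
import struct

# Hypothetical frame layout (an assumption, not OpenClaw's wire format):
# magic (4 bytes) | rank (uint8) | dims (rank x uint32) | float32 payload
# All multi-byte fields are little-endian.
MAGIC = b"NBUS"


def _element_count(shape):
    count = 1
    for dim in shape:
        count *= dim
    return count


def encode_tensor(shape, values):
    """Pack a flat list of floats and its shape into one binary frame."""
    if _element_count(shape) != len(values):
        raise ValueError("shape does not match number of values")
    header = MAGIC + struct.pack("<B", len(shape))
    header += struct.pack(f"<{len(shape)}I", *shape)
    payload = struct.pack(f"<{len(values)}f", *values)
    return header + payload


def decode_tensor(frame):
    """Recover (shape, values) from a frame produced by encode_tensor."""
    if frame[:4] != MAGIC:
        raise ValueError("not a tensor frame")
    rank = frame[4]
    dims_end = 5 + 4 * rank
    shape = struct.unpack(f"<{rank}I", frame[5:dims_end])
    count = _element_count(shape)
    values = struct.unpack(f"<{count}f", frame[dims_end:dims_end + 4 * count])
    return shape, list(values)
```

Every element occupies exactly four bytes, so a receiver can locate any value by offset without parsing text. Values are stored as float32, which means inputs that are not exactly representable in 32 bits are rounded on encode.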

The network topology operates on a leaderless, dynamic directed acyclic graph (DAG) consensus mechanism. When a complex query enters the ingress controller, the request is not statically routed to a single foundation model. Instead, the orchestrator evaluates the prompt's semantic weight, token density, and required context window, then allocates sub-tasks to the most computationally efficient node in the cluster. For example, it might route a basic summarization task to a quantized local edge model running on consumer silicon while dispatching a complex code synthesis task to a high-parameter cloud instance.
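The routing decision can be sketched as a filter followed by a cost comparison. The `Node` and `Task` fields, the integer capability tiers, and the `route` function below are hypothetical; the article does not describe how the orchestrator scores semantic weight or token density.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    name: str
    max_context: int       # largest prompt, in tokens, the node accepts
    capability: int        # coarse skill tier; higher handles harder tasks
    cost_per_token: float  # relative cost of running on this node


@dataclass(frozen=True)
class Task:
    kind: str
    prompt_tokens: int
    difficulty: int        # minimum capability tier required


def route(task, nodes):
    """Pick the cheapest node that can hold the prompt and handle the task."""
    eligible = [
        node for node in nodes
        if node.max_context >= task.prompt_tokens
        and node.capability >= task.difficulty
    ]
    if not eligible:
        raise LookupError(f"no node can serve task {task.kind!r}")
    return min(eligible, key=lambda node: node.cost_per_token)
```

With one small edge node and one large cloud node, a short summarization task lands on the edge node because it is the cheapest eligible option, while a task whose difficulty exceeds the edge node's tier falls through to the cloud node.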

Orchestration Mechanisms within the Cognitive Runtime

The core of the OpenClaw framework is its Cognitive Runtime, a deterministic execution environment built to manage the probabilistic lifecycle of non-deterministic AI processes. Traditional orchestrators excel at managing binary container state, but they are poorly suited to managing continuous reasoning state. The Cognitive Runtime fills this gap by providing fault tolerance not only for process crashes and hardware failures, but also for logical hallucinations, recursive reasoning loops, and catastrophic forgetting within the context window.

A Supervisor Daemon continuously monitors the entropy, perplexity, and token confidence intervals of the active streams. If a worker agent's logical coherence begins to degrade, which the Supervisor detects as sudden spikes in attention matrix variance, the Supervisor preemptively halts the execution thread. It then injects a synthetic grounding prompt, a highly compressed embedding derived from the system's persistent memory store, to recalibrate the agent's attention heads before the task resumes. The runtime is augmented by several low-level optimizations designed to maximize hardware utilization:

- Dynamic Context Resizing: Automatically expanding or truncating the context window based on real-time token pressure and semantic relevance scoring.

- Speculative Decoding Strategies: Using fast, parameter-efficient draft models to predict upcoming tokens, which the primary model then verifies asynchronously to reduce inference latency.

- Multi-Agent Threading: Concurrently executing adversarial reasoning loops so that distinct agents cross-validate generated hypotheses and catch logical fallacies before the final output vector is compiled.
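Of the optimizations listed, speculative decoding is the easiest to show in a few lines. The sketch below is a simplified greedy variant with toy stand-in models: `target_next` and `draft_next` are hypothetical callables that map a token list to the next token. It verifies proposals synchronously and one at a time, whereas a real system would score all drafted tokens in a single batched pass of the primary model.

```python
def greedy_decode(target_next, prompt, max_new_tokens):
    """Baseline: ask the primary model for every token."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        tokens.append(target_next(tokens))
    return tokens


def speculative_decode(target_next, draft_next, prompt, max_new_tokens, lookahead=4):
    """Greedy speculative decoding: keep draft tokens the primary model agrees with."""
    tokens = list(prompt)
    goal = len(prompt) + max_new_tokens
    while len(tokens) < goal:
        # 1. The cheap draft model proposes a short run of tokens.
        proposal = []
        for _ in range(min(lookahead, goal - len(tokens))):
            proposal.append(draft_next(tokens + proposal))
        # 2. The primary model checks each proposed token in order.
        accepted = []
        for token in proposal:
            expected = target_next(tokens + accepted)
            if token == expected:
                accepted.append(token)
            else:
                # First disagreement: take the primary model's token and
                # discard the rest of the draft.
                accepted.append(expected)
                break
        tokens.extend(accepted)
    return tokens
```

Because every accepted token is one the primary model would have chosen itself, the output matches plain greedy decoding exactly. The speedup in practice comes from checking several drafted tokens per primary-model pass, which this sequential sketch does not model.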

Stateful Context Partitioning Across Micro-Agents

Maintaining a cohesive thread of logic across prolonged autonomous operations is a major computational challenge, often described as context exhaustion. OpenClaw addresses this limitation through an architectural pattern we term Stateful Context Partitioning. Rather than forcing a single model to hold the entire history of a multi-day interaction spanning thousands of tokens, the framework segments memory hierarchically into short-term buffers, semantic working memory, and long-term archival graph storage.

When an agent transitions between sub-routines or offloads a task to a peer node, its active context is serialized into a compressed intermediate representation. This state snapshot captures the essential entities, current operational objectives, and derived facts, and strips away the raw conversational scaffolding and redundant syntactic noise. When a new agent inherits the execution thread, it deserializes this dense payload and synchronizes its context without the token overhead and latency penalty of re-parsing large plain-text logs.
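A handoff of this kind might look like the following sketch. The `ContextSnapshot` fields mirror the three things the snapshot is said to capture; the JSON-plus-zlib encoding is an assumption chosen for brevity, since the article does not name the actual intermediate representation.

```python
import json
import zlib
from dataclasses import asdict, dataclass


@dataclass
class ContextSnapshot:
    objective: str   # current operational objective
    entities: dict   # essential entities, name -> short description
    facts: list      # derived facts worth carrying forward


def pack(snapshot):
    """Serialize a snapshot into a compact, compressed byte payload."""
    raw = json.dumps(asdict(snapshot), sort_keys=True, separators=(",", ":"))
    return zlib.compress(raw.encode("utf-8"))


def unpack(blob):
    """Rebuild the snapshot on the inheriting agent."""
    return ContextSnapshot(**json.loads(zlib.decompress(blob).decode("utf-8")))
```

The inheriting agent receives only the distilled state, not the transcript that produced it, which is where the token savings in this pattern come from.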

This partitioning mechanism relies on a specialized Key-Value Memory Graph deployed as a sidecar to every worker node. Each vertex in the graph represents a crystallized concept, a verified fact, or a specific user directive, while the edges denote semantic relationships and causal dependencies. The Cognitive Runtime queries this graph during inference, using similarity search to inject into the active prompt context only the local sub-graph relevant to the current step.
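The retrieval step can be sketched as a similarity search that seeds a short graph walk. The `MemoryGraph` class, its method names, and the use of cosine similarity over plain Python lists are all illustrative assumptions, not the framework's API.

```python
import math


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class MemoryGraph:
    def __init__(self):
        self.entries = {}  # id -> (text, embedding)
        self.edges = {}    # id -> set of ids it points to

    def add_entry(self, entry_id, text, embedding):
        self.entries[entry_id] = (text, embedding)
        self.edges.setdefault(entry_id, set())

    def add_edge(self, src, dst):
        self.edges.setdefault(src, set()).add(dst)

    def relevant_subgraph(self, query, top_k=1, hops=1):
        """Return ids of the best matches plus whatever they link to within `hops`."""
        ranked = sorted(
            self.entries,
            key=lambda entry_id: cosine(query, self.entries[entry_id][1]),
            reverse=True,
        )
        frontier = set(ranked[:top_k])
        selected = set(frontier)
        for _ in range(hops):
            # Follow outgoing edges from the current frontier, skipping seen ids.
            frontier = {
                dst for src in frontier for dst in self.edges.get(src, ())
            } - selected
            selected |= frontier
        return sorted(selected)
```

Only the texts of the returned ids would be injected into the prompt, so the cost of a lookup scales with the size of the relevant neighbourhood rather than with the whole graph.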

Local-First Vector Manifolds and Embeddings Retrieval

Enterprise artificial intelligence adoption is frequently bottlenecked by stringent data sovereignty, compliance frameworks, and privacy mandates. The OpenClaw architecture champions a radical local-first philosophy, ensuring that sensitive intellectual property, proprietary codebases, and classified financial data never traverse external, untrusted networks during the retrieval-augmented generation phase. This critical security posture is achieved through the deployment of isolated Vector Manifolds directly within the customer's secure, air-gapped intranet environments.

Unlike centralized, cloud-hosted vector databases that require continuous outbound synchronization and constant network availability, OpenClaw's Vector Manifolds operate as immutable, version-controlled embedding indices distributed at the edge. When internal documents are ingested, local embedding models map the unstructured data into a high-dimensional latent space entirely on-premises. These local indices are then queried during inference with zero external round trips, which keeps retrieval fast and keeps the data where it already resides.
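As a rough illustration of an immutable, versioned local index, consider the sketch below. The `embed` function is a toy hashed bag-of-words stand-in for a real local embedding model, and `LocalIndex` with its content-hash `version` is a hypothetical design; neither is taken from OpenClaw.

```python
import hashlib
import math

DIM = 512  # toy embedding width, chosen arbitrarily for this sketch


def embed(text):
    """Toy stand-in for a local embedding model: hashed bag-of-words, L2-normalized."""
    vec = [0.0] * DIM
    for word in text.lower().split():
        digest = hashlib.sha256(word.encode("utf-8")).digest()
        vec[int.from_bytes(digest[:4], "big") % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return tuple(v / norm for v in vec) if norm else tuple(vec)


class LocalIndex:
    """Immutable in-process index; a new document set means a new index and version."""

    def __init__(self, documents):
        self._entries = tuple((doc, embed(doc)) for doc in documents)
        digest = hashlib.sha256()
        for doc, _ in self._entries:
            digest.update(doc.encode("utf-8"))
            digest.update(b"\x00")  # separator so document boundaries affect the hash
        self.version = digest.hexdigest()

    def search(self, query, top_k=1):
        """Rank documents by dot product with the query; no network calls involved."""
        q = embed(query)
        ranked = sorted(
            self._entries,
            key=lambda entry: sum(a * b for a, b in zip(q, entry[1])),
            reverse=True,
        )
        return [doc for doc, _ in ranked[:top_k]]
```

Deriving the version from the content means two nodes holding the same documents report the same version string, which gives a cheap way to tell whether their indices have diverged.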

To keep disparate local nodes synchronized without compromising data integrity or exposing raw information, we use a proprietary federated delta-sync protocol. Instead of transmitting raw text, document shards, or even raw high-dimensional vectors, the protocol broadcasts only the gradient shifts within the semantic index. This allows the organization's global knowledge base to evolve coherently and continuously while enforcing privacy guarantees across all corporate network boundaries.

The Security Enclave: Ephemeral Tokenization and Sandbox Isolation

Executing shell commands, calling APIs, and deploying code generated by autonomous agents opens serious vectors for remote code execution and lateral network movement. OpenClaw mitigates these threats through a zero-trust Security Enclave architecture that treats every generated action or script as a hostile payload. The execution tier is fully decoupled from the cognitive reasoning tier through strict memory isolation and hardware-level virtualization layers.

All generated scripts, system commands, and network requests are routed to an Ephemeral Sandbox: a highly restricted, single-use micro-VM spun up within milliseconds using optimized hypervisor technology. These sandboxes have no network egress unless a destination is explicitly allowlisted through a multi-signature cryptographic approval workflow controlled by human operators. File system access is also virtualized: the agent operates on an overlay file system that exists only in RAM and is destroyed upon task completion or failure.

To manage access to internal enterprise APIs, databases, and microservices, OpenClaw employs Ephemeral Tokenization. Agents are never provisioned with long-lived credentials or static API keys. Instead, the Supervisor Daemon generates granular, time-bound, scope-restricted JSON Web Tokens at the moment of execution. Injecting policy shapes formatted strictly as { "role": "ephemeral_agent", "ttl": 300 } limits the blast radius of any single token. If an agent hallucinates and attempts an operation outside the bounds of its token, the API gateway rejects the payload, severs the connection, and dispatches an immutable audit trace to the centralized security information and event management (SIEM) console.
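A short-lived, scope-restricted token of this kind can be sketched with the standard library alone. The example signs an HS256 JSON Web Token by hand; the `scope` claim, the translation of `ttl` into the standard `exp` claim, and the function names are assumptions, and a production system would normally rely on a vetted JWT library and asymmetric keys.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url(data):
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text):
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(secret, signing_input):
    return hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()


def mint_token(secret, scope, ttl=300, now=None):
    """Issue a token valid for `ttl` seconds and for one scope only."""
    issued = int(time.time()) if now is None else now
    header = {"alg": "HS256", "typ": "JWT"}
    claims = {"role": "ephemeral_agent", "scope": scope,
              "iat": issued, "exp": issued + ttl}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    return signing_input + "." + _b64url(_sign(secret, signing_input))


def verify_token(secret, token, required_scope, now=None):
    """Gateway-side check: signature first, then expiry and scope."""
    current = int(time.time()) if now is None else now
    try:
        header_b64, claims_b64, sig_b64 = token.split(".")
        expected = _sign(secret, header_b64 + "." + claims_b64)
        if not hmac.compare_digest(expected, _b64url_decode(sig_b64)):
            return False
        claims = json.loads(_b64url_decode(claims_b64))
    except ValueError:
        return False  # malformed token, bad base64, or bad JSON
    return claims.get("scope") == required_scope and current < claims.get("exp", 0)
```

A token minted for one scope fails verification for any other scope, and it stops verifying once `exp` passes, so a leaked token is useful only briefly and only for the operation it was issued for.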

Scaling the Neural Bus: Event-Driven Actuator Orchestration

As an enterprise deployment grows from dozens to thousands of concurrent autonomous agents, synchronous task management and blocking I/O become untenable and can lock up the system. OpenClaw therefore adopts an asynchronous, event-driven Actuator Orchestration model to sustain high transactional throughput. The system treats every internal state change, user interaction, memory mutation, and API response as a discrete asynchronous event published to the Neural Bus.

Actuators are specialized micro-services responsible solely for executing tangible side effects, such as writing to a relational database, dispatching a webhook, or mutating cloud infrastructure. Each one subscribes to specific, typed event topics on the bus. When a reasoning model determines that an external action is required, it does not execute the code directly. Instead, it emits a strongly typed intention payload to the bus. The corresponding Actuator independently validates the payload against its schema, applies enterprise rate limiting, and performs the execution asynchronously, entirely outside the cognitive reasoning thread.
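The intention-then-actuate flow can be sketched with an `asyncio` queue standing in for the bus. The `Intention` and `WebhookActuator` classes, the topic name, and the field-type schema check are hypothetical, and the side effect is simulated rather than performed; rate limiting is omitted for brevity.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class Intention:
    topic: str
    payload: dict


class WebhookActuator:
    topic = "webhook.dispatch"
    required_fields = {"url": str, "body": dict}  # minimal stand-in for a schema

    def __init__(self):
        self.executed = []
        self.rejected = []

    def validate(self, payload):
        return all(
            isinstance(payload.get(name), kind)
            for name, kind in self.required_fields.items()
        )

    async def run(self, queue):
        """Consume intentions forever; the reasoning side never waits on this loop."""
        while True:
            intention = await queue.get()
            try:
                if intention.topic == self.topic and self.validate(intention.payload):
                    await asyncio.sleep(0)  # placeholder for the real side effect
                    self.executed.append(intention.payload["url"])
                else:
                    self.rejected.append(intention)
            finally:
                queue.task_done()


async def demo():
    # A bounded queue makes put() wait when full, which is how backpressure
    # reaches producers instead of overwhelming the actuator.
    queue = asyncio.Queue(maxsize=8)
    actuator = WebhookActuator()
    worker = asyncio.create_task(actuator.run(queue))
    await queue.put(Intention("webhook.dispatch",
                              {"url": "https://example.invalid/hook", "body": {"ok": True}}))
    await queue.put(Intention("webhook.dispatch", {"url": 42}))  # fails validation
    await queue.join()
    worker.cancel()
    try:
        await worker
    except asyncio.CancelledError:
        pass
    return actuator
```

Running `asyncio.run(demo())` returns an actuator that executed the well-formed intention and rejected the malformed one. The producer only ever enqueues; validation and execution happen in the actuator's own task.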

This decoupling provides architectural resilience and horizontal scalability. During unpredictable traffic spikes or complex incident response scenarios, the event queue absorbs the backpressure, preventing the inference nodes from crashing under load. It also enables complex choreographies: a single intention event emitted by a worker node can trigger a cascade of secondary actuators, from logging and compliance auditing to initializing downstream specialized agents, without ever blocking the primary analytical loop of the core framework.